Privacy complaints received by tech giants’ favorite EU watchdog up more than 2x since GDPR

A report by the lead data watchdog for a large number of tech giants operating in Europe shows a significant increase in privacy complaints and data breach notifications since the region’s updated privacy framework came into force last May.

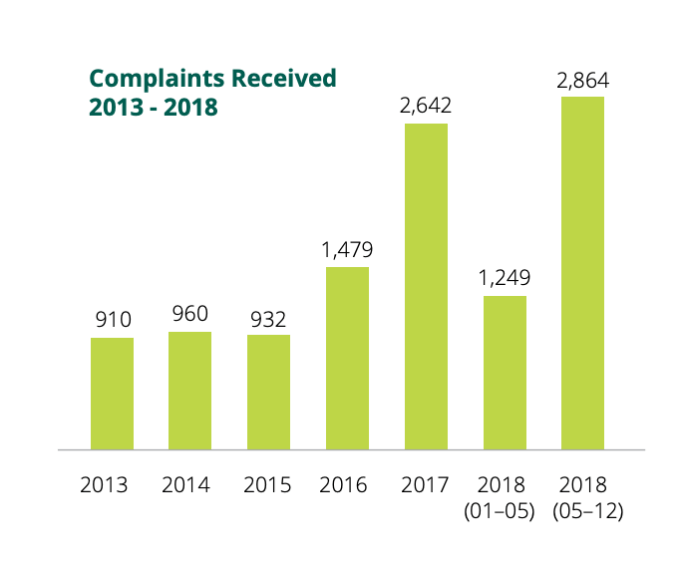

The Irish Data Protection Commission (DPC)’s annual report, published today, covers the period from May 25 2018, the day the EU’s General Data Protection Regulation (GDPR) came into force, to December 31 2018. It shows the DPC received more than double the number of complaints post-GDPR compared with the portion of 2018 before the new regime came in: 2,864 and 1,249 complaints respectively.

That makes a total of 4,113 complaints for full year 2018 (vs just 2,642 for 2017), which works out to a year-on-year increase of about 56 per cent.

But the increase pre- and post-GDPR is even greater, at roughly 129 per cent, suggesting the regulation is working as intended by building momentum and support for individuals to exercise their fundamental rights.
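For readers who want to check the arithmetic, here is a minimal sketch in Python that uses only the complaint counts quoted above. The variable names are illustrative and are not taken from the DPC report. The two 2018 periods are of unequal length, so the pre/post comparison is a raw count comparison rather than a rate.

```python
# Complaint counts as quoted in the article (source: DPC annual report).
pre_gdpr_2018 = 1249    # January 1 to May 24, 2018
post_gdpr_2018 = 2864   # May 25 to December 31, 2018
total_2017 = 2642       # full year 2017

# Full-year 2018 total is the sum of the two 2018 periods.
total_2018 = pre_gdpr_2018 + post_gdpr_2018

# Percentage increase = (new - old) / old * 100.
yoy_pct = (total_2018 - total_2017) / total_2017 * 100
post_vs_pre_pct = (post_gdpr_2018 - pre_gdpr_2018) / pre_gdpr_2018 * 100

print(f"2018 total: {total_2018}")
print(f"Year-on-year increase: {yoy_pct:.1f}%")
print(f"Post- vs pre-GDPR increase: {post_vs_pre_pct:.1f}%")
```

On these figures the year-on-year rise comes out at roughly 56 per cent, and the post-GDPR count is about 2.3 times the pre-GDPR count.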

“The phenomenon that is the [GDPR] has demonstrated one thing above all else: people’s interest in and appetite for understanding and controlling use of their personal data is anything but a reflection of apathy and fatalism,” writes Helen Dixon, Ireland’s commissioner for data protection.

She adds that the rise in the number of complaints and queries to DPAs across the EU since May 25 demonstrates “a new level of mobilisation to action on the part of individuals to tackle what they see as misuse or failure to adequately explain what is being done with their data”.

While Europe has had online privacy rules since 1995, a weak regime of enforcement essentially allowed them to be ignored for decades, and allowed Internet companies to grab and exploit web users’ data without full regard and respect for Europeans’ privacy rights.

But regulators hit the reset button last year. And Ireland’s data watchdog is an especially interesting agency to watch if you’re interested in assessing how GDPR is working, given how many tech giants have chosen to place their international data flows under the Irish DPC’s supervision.

More cross-border complaints

“The role places an important duty on the DPC to safeguard the data protection rights of hundreds of millions of individuals across the EU, a duty that the GDPR requires the DPC to fulfil in cooperation with other supervisory authorities,” the DPC writes in the report, discussing its role of supervisory authority for multiple tech multinationals and acknowledging both a “greatly expanded role under the GDPR” and a “significantly increased workload”.

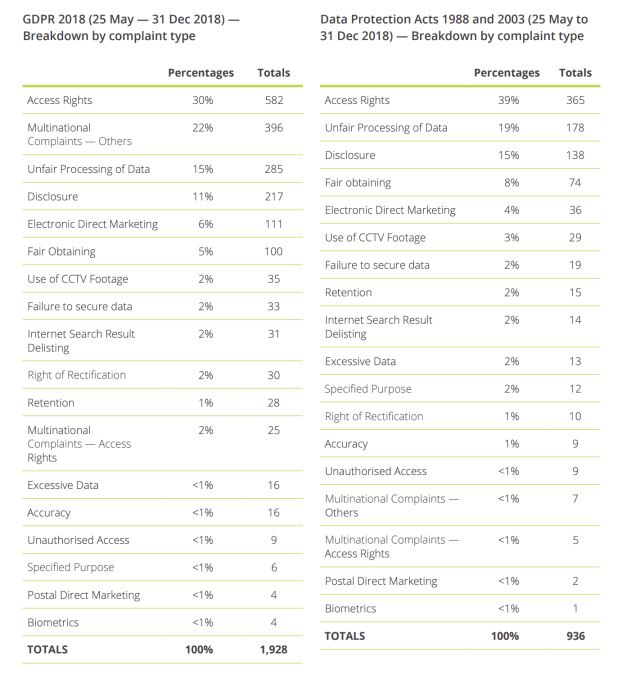

A breakdown of GDPR vs Data Protection Acts 1988 and 2003 complaint types over the report period suggests complaints targeted at multinational entities have leapt up under the new DP regime.

For some complaint types the old rules resulted in just 2 per cent of complaints being targeted at multinationals vs close to a quarter (22 per cent) in the same categories under GDPR.

It’s the most marked difference between the old rules and the new — underlining the DPC’s expanded workload in acting as a hub (and often lead supervisory agency) for cross-border complaints under GDPR’s one-stop shop mechanism.

The category with the largest proportion of complaints under GDPR over the report period was access rights (30%), with the DPC receiving a full 582 complaints from people who feel they’re not getting their due data. Access rights was also the most complained-about category under the prior data rules over this period.

Other prominent complaint types continue to be unfair processing of data (285 GDPR complaints vs 178 under the DPA); disclosure (217 vs 138); and electronic direct marketing (111 vs 36).

EU policymakers’ intent with GDPR is to redress the imbalance of weakly enforced rights, including by creating new opportunities for enforcement via a regime of supersized fines. (GDPR allows for penalties as high as 4 per cent of annual turnover, and in January the French data watchdog slapped Google with a $57M GDPR penalty related to transparency and consent, albeit still far off that theoretical maximum.)

Importantly, the regulation also introduced a collective redress option which has been adopted by some EU Member States.

This allows for third party organizations such as consumer rights groups to lodge data protection complaints on individuals’ behalf. The provision has led to a number of strategic complaints being filed by organized experts since last May (including in the case of the aforementioned Google fine) — spinning up momentum for collective consumer action to counter rights erosion. Again that’s important in a complex area that remains difficult for consumers to navigate without expert help.

For upheld complaints the GDPR ‘nuclear option’ is not fines though; it’s the ability for data protection agencies to order data controllers to stop processing data.

That remains the most significant tool in the regulatory toolbox. And depending on the outcome of various ongoing strategic GDPR complaints it could prove hugely significant in reshaping what data experts believe are systematic privacy incursions by adtech platform giants.

And while well-resourced tech giants may be able to factor in even very meaty financial penalties, as just a cost of doing a very lucrative business, data-focused business models could be far more precarious if processors can suddenly be slapped with an order to limit or even cease processing data. (As indeed Facebook’s business just has in German
